ProxySQL Native Support for Percona XtraDB Cluster (PXC)

ProxySQL versions up to 1.x did not natively support Percona XtraDB Cluster (PXC). Instead, they relied on the flexibility offered by the scheduler, which allowed users to implement their own preferred way of managing ProxySQL's behaviour in relation to Galera events. From version 2.0 we can use native ProxySQL support for PXC. The mechanism to activate native support is very similar to the one already in place for group replication. In brief, it is based on the table [runtime_]mysql_galera_hostgroups, and the information needed is mostly the same:

- writer_hostgroup: The hostgroup ID that refers to the WRITER

- backup_writer_hostgroup: The hostgroup ID of the hostgroup that will contain the candidate writers

- reader_hostgroup: The reader hostgroup ID, containing the list of servers that need to be taken into consideration

- offline_hostgroup: The hostgroup ID that will eventually contain the writer that is put OFFLINE

- active: True [1] / False [0], depending on whether this configuration needs to be used or not

- max_writers: The MAX number of writers you want to have at the same time. In a sane setup this should always be 1, but if you want to have multiple writers, you can set it up to the number of nodes.

- writer_is_also_reader: If true [1], the writer will NOT be removed from the reader HG

- max_transactions_behind: The wsrep_local_recv_queue value above which the node will be set OFFLINE. This must be set carefully, after observing the node's behaviour.

- comment: I suggest adding some meaningful notes to identify what is what.

Given the above, let us see what we need to do in order to have a working Galera native solution. I will have three servers:

- 192.168.1.205 (Node1)

- 192.168.1.21 (Node2)

- 192.168.1.231 (Node3)

As a set of hostgroups, I will have:

- Writer HG -> 100

- Reader HG -> 101

- BackupW HG -> 102

- Offline HG -> 9101

To set it up, servers first:

    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.1.205',101,3306,1000);
    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.1.21',101,3306,1000);
    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.1.231',101,3306,1000);

Then the Galera settings:

    insert into mysql_galera_hostgroups (writer_hostgroup,backup_writer_hostgroup,reader_hostgroup, offline_hostgroup,active,max_writers,writer_is_also_reader,max_transactions_behind) values (100,102,101,9101,0,1,1,16);

As usual, if we want to have R/W split we need to define the rules for it:

    insert into mysql_query_rules (rule_id,proxy_port,schemaname,username,destination_hostgroup,active,retries,match_digest,apply) values(1040,6033,'windmills','app_test',100,1,3,'^SELECT.*FOR UPDATE',1);
    insert into mysql_query_rules (rule_id,proxy_port,schemaname,username,destination_hostgroup,active,retries,match_digest,apply) values(1041,6033,'windmills','app_test',101,1,3,'^SELECT.*@@',1);

    save mysql query rules to disk;
    load mysql query rules to runtime;
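To make the effect of the two rules easier to see, here is a minimal Python sketch of how a query digest could be routed. It is not ProxySQL code: the `route` helper, the case-insensitive matching and the default hostgroup of 100 (the writer) are assumptions made for illustration; only the patterns, rule IDs and destination hostgroups come from the rules above.

```python
import re
from typing import NamedTuple


class QueryRule(NamedTuple):
    rule_id: int
    match_digest: str
    destination_hostgroup: int


# Patterns and hostgroups copied from the two INSERTs above.
RULES = [
    QueryRule(1040, r"^SELECT.*FOR UPDATE", 100),
    QueryRule(1041, r"^SELECT.*@@", 101),
]

# Assumption: queries that match no rule go to the writer hostgroup.
DEFAULT_HOSTGROUP = 100


def route(digest: str) -> int:
    """Return the hostgroup of the first matching rule, in rule_id order."""
    for rule in sorted(RULES, key=lambda r: r.rule_id):
        # Assumption: matching is case-insensitive.
        if re.search(rule.match_digest, digest, re.IGNORECASE):
            return rule.destination_hostgroup
    return DEFAULT_HOSTGROUP


for query in (
    "SELECT id FROM windmills.t WHERE id=? FOR UPDATE",
    "SELECT @@max_allowed_packet",
    "INSERT INTO windmills.t VALUES (?)",
):
    print(route(query), query)
```

Because both rules have apply=1, the first match ends the evaluation, which is why the FOR UPDATE rule needs the lower rule_id.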

Then another important variable: the server version. Please do yourself a favour and NEVER use the default.

    update global_variables set variable_value='5.7.0' where variable_name='mysql-server_version';
    LOAD MYSQL VARIABLES TO RUNTIME;
    SAVE MYSQL VARIABLES TO DISK;

Finally, activate the whole thing:

    save mysql servers to disk;
    load mysql servers to runtime;

One thing to note before we go ahead. In the list of servers I have:

- Filled only the READER HG

- Used the same weight

This is because of the election mechanism ProxySQL uses to identify the writer, and the (many) problems that may be attached to it.

For now let us go ahead and see what happens when I load this information to runtime. Before running the above commands:

    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | weight | hostgroup | srv_host      | srv_port | STATUS  | ConnUsed | ConnFree | ConnOK | ConnERR | MaxConnUsed | Queries | Queries_GTID_sync | Bytes_data_sent | Bytes_data_recv | Latency_us |
    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+

After:

    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | weight | hostgroup | srv_host      | srv_port | STATUS  | ConnUsed | ConnFree | ConnOK | ConnERR | MaxConnUsed | Queries | Queries_GTID_sync | Bytes_data_sent | Bytes_data_recv | Latency_us |
    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | 1000   | 100       | 192.168.1.231 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 501        |
    | 1000   | 101       | 192.168.1.231 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 501        |
    | 1000   | 101       | 192.168.1.21  | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 546        |
    | 1000   | 101       | 192.168.1.205 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 467        |
    | 1000   | 102       | 192.168.1.21  | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 546        |
    | 1000   | 102       | 192.168.1.205 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 467        |
    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+

    mysql> SELECT * FROM runtime_mysql_galera_hostgroups \G
    *************************** 1. row ***************************
           writer_hostgroup: 100
    backup_writer_hostgroup: 102
           reader_hostgroup: 101
          offline_hostgroup: 9101
                     active: 0  <----------- note this
                max_writers: 1
      writer_is_also_reader: 1
    max_transactions_behind: 16
                    comment: NULL
    1 row in set (0.01 sec)

As we can see, ProxySQL has taken the nodes from my READER group and distributed them, adding node 3 to the writer hostgroup and nodes 1 and 2 to the backup writer hostgroup.

But – there is a but – wasn't my rule set with active=0? Indeed it was, and I assume this is a bug (#Issue 1902).

The other thing we should note is that ProxySQL has elected node 3 (192.168.1.231) as writer.

As I said before, what should we do IF we want to have a specific node as the preferred writer? We need to modify its weight.

So, say we want to have node 1 (192.168.1.205) as writer; we will need something like this:

    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.1.205',101,3306,10000);
    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.1.21',101,3306,100);
    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.1.231',101,3306,100);

Doing that will give us:

    +--------+-----------+---------------+----------+--------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | weight | hostgroup | srv_host      | srv_port | STATUS | ConnUsed | ConnFree | ConnOK | ConnERR | MaxConnUsed | Queries | Queries_GTID_sync | Bytes_data_sent | Bytes_data_recv | Latency_us |
    +--------+-----------+---------------+----------+--------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | 10000  | 100       | 192.168.1.205 | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 2209       |
    | 100    | 101       | 192.168.1.231 | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 546        |
    | 100    | 101       | 192.168.1.21  | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 508        |
    | 10000  | 101       | 192.168.1.205 | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 2209       |
    | 100    | 102       | 192.168.1.231 | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 546        |
    | 100    | 102       | 192.168.1.21  | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 508        |
    +--------+-----------+---------------+----------+--------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
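The two election results seen so far (node 3 with equal weights, node 1 once its weight is raised) are consistent with a simple ranking rule. The Python sketch below models that rule; it is an assumption inferred from the outputs in this article, not ProxySQL's actual code. In particular, the tie-break on the hostname string and the `elect` helper are hypothetical.

```python
from typing import List, NamedTuple, Tuple


class Node(NamedTuple):
    hostname: str
    weight: int


def elect(nodes: List[Node], max_writers: int = 1) -> Tuple[List[Node], List[Node]]:
    """Split healthy nodes into (writers, backup_writers)."""
    # Assumption: the highest weight wins; on a tie, the hostname that
    # sorts last as a plain string wins.
    ranked = sorted(nodes, key=lambda n: (n.weight, n.hostname), reverse=True)
    return ranked[:max_writers], ranked[max_writers:]


equal = [
    Node("192.168.1.205", 1000),
    Node("192.168.1.21", 1000),
    Node("192.168.1.231", 1000),
]
preferred = [
    Node("192.168.1.205", 10000),
    Node("192.168.1.21", 100),
    Node("192.168.1.231", 100),
]

for label, nodes in (("equal weights", equal), ("node 1 preferred", preferred)):
    writers, backups = elect(nodes)
    print(label, "-> writer:", writers[0].hostname,
          "backups:", [b.hostname for b in backups])
```

With equal weights the sketch picks 192.168.1.231, and with the raised weight it picks 192.168.1.205, matching the two outputs above.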

As you may have noticed, because we set a higher WEIGHT on node 1, this node will also become the most utilized for reads.

We probably do not want that, so let us modify the reader weight:

    UPDATE mysql_servers SET weight=10 WHERE hostgroup_id=101 AND hostname='192.168.1.205';
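To put numbers on the effect of the weight, the short Python sketch below computes the approximate share of read traffic each node would receive in the reader hostgroup. It assumes traffic within a hostgroup is distributed in proportion to weight, which is a simplification; `read_share` is a hypothetical helper, and the weights are the ones used above.

```python
from typing import Dict


def read_share(weights: Dict[str, int]) -> Dict[str, float]:
    """Fraction of reads per host, assuming selection proportional to weight."""
    total = sum(weights.values())
    return {host: weight / total for host, weight in weights.items()}


# Reader hostgroup (101) before and after lowering node 1's reader weight.
before = {"192.168.1.205": 10000, "192.168.1.21": 100, "192.168.1.231": 100}
after = {**before, "192.168.1.205": 10}

for label, weights in (("before", before), ("after", after)):
    for host, share in read_share(weights).items():
        print(f"{label:6} {host:14} {share * 100:5.1f}%")
```

Under this assumption node 1 goes from roughly 98% of the reads (10000 out of 10200) to under 5% (10 out of 210).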

At this point, if we trigger the failover with set global wsrep_reject_queries=all; on node 1, ProxySQL will take action and elect another node as writer:

    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | weight | hostgroup | srv_host      | srv_port | STATUS  | ConnUsed | ConnFree | ConnOK | ConnERR | MaxConnUsed | Queries | Queries_GTID_sync | Bytes_data_sent | Bytes_data_recv | Latency_us |
    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | 100    | 100       | 192.168.1.231 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 562        |
    | 100    | 101       | 192.168.1.231 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 562        |
    | 100    | 101       | 192.168.1.21  | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 588        |
    | 100    | 102       | 192.168.1.21  | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 588        |
    | 10000  | 9101      | 192.168.1.205 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 468        |
    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+

Node 3 (192.168.1.231) is the new writer and node 1 is in the special hostgroup for OFFLINE nodes. Let us now see what happens IF we put node 1 back:

    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | weight | hostgroup | srv_host      | srv_port | STATUS  | ConnUsed | ConnFree | ConnOK | ConnERR | MaxConnUsed | Queries | Queries_GTID_sync | Bytes_data_sent | Bytes_data_recv | Latency_us |
    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | 10000  | 100       | 192.168.1.205 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 449        |
    | 100    | 101       | 192.168.1.231 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 532        |
    | 100    | 101       | 192.168.1.21  | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 569        |
    | 10000  | 101       | 192.168.1.205 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 449        |
    | 100    | 102       | 192.168.1.231 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 532        |
    | 100    | 102       | 192.168.1.21  | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 569        |
    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+

Ooops, the READER has come back with the HIGHEST weight and, as such, it will once more be the most used node. To fix it, we need to re-run the same update as before.

But is there a way to avoid this? In short, the answer is NO! This, in my opinion, is BAD and is worth a feature request, because it can really bring a node to its knees.

Now, this is not the only problem. There is another point that is probably worth discussing, which is the fact that ProxySQL currently performs both FAILOVER and FAILBACK.

Failover is obviously something we want to have, but failback is another discussion.

The point is, once the failover is complete and the cluster has redistributed the incoming requests, doing a failback is an impacting operation that can be disruptive too.

If all nodes are treated as equal, there is no real way to prevent it, while if YOU set a node to be the main writer, something can be done. Let us see what and how. Say we have:

    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.1.205',101,3306,1000);
    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.1.21',101,3306,100);
    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.1.231',101,3306,100);

    +--------+-----------+---------------+----------+--------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | weight | hostgroup | srv_host      | srv_port | STATUS | ConnUsed | ConnFree | ConnOK | ConnERR | MaxConnUsed | Queries | Queries_GTID_sync | Bytes_data_sent | Bytes_data_recv | Latency_us |
    +--------+-----------+---------------+----------+--------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | 1000   | 100       | 192.168.1.205 | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 470        |
    | 100    | 101       | 192.168.1.231 | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 558        |
    | 100    | 101       | 192.168.1.21  | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 613        |
    | 10     | 101       | 192.168.1.205 | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 470        |
    | 100    | 102       | 192.168.1.231 | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 558        |
    | 100    | 102       | 192.168.1.21  | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 613        |
    +--------+-----------+---------------+----------+--------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+

Let us put the node down with:

    set global wsrep_reject_queries=all;

And check:

    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | weight | hostgroup | srv_host      | srv_port | STATUS  | ConnUsed | ConnFree | ConnOK | ConnERR | MaxConnUsed | Queries | Queries_GTID_sync | Bytes_data_sent | Bytes_data_recv | Latency_us |
    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | 100    | 100       | 192.168.1.231 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 519        |
    | 100    | 101       | 192.168.1.231 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 519        |
    | 100    | 101       | 192.168.1.21  | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 506        |
    | 100    | 102       | 192.168.1.21  | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 506        |
    | 1000   | 9101      | 192.168.1.205 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 527        |
    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+

We can now manipulate the weight in the special OFFLINE hostgroup and see what happens:

    UPDATE mysql_servers SET weight=10 WHERE hostgroup_id=9101 AND hostname='192.168.1.205';

Then I put the node up again:

    set global wsrep_reject_queries=none;

    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | weight | hostgroup | srv_host      | srv_port | STATUS  | ConnUsed | ConnFree | ConnOK | ConnERR | MaxConnUsed | Queries | Queries_GTID_sync | Bytes_data_sent | Bytes_data_recv | Latency_us |
    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+
    | 100    | 100       | 192.168.1.231 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 537        |
    | 100    | 101       | 192.168.1.231 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 537        |
    | 100    | 101       | 192.168.1.21  | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 573        |
    | 10     | 101       | 192.168.1.205 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 458        |
    | 100    | 102       | 192.168.1.21  | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 573        |
    | 10     | 102       | 192.168.1.205 | 3306     | ONLINE  | 0        | 0        | 0      | 0       | 0           | 0       | 0                 | 0               | 0               | 458        |
    +--------+-----------+---------------+----------+---------+----------+----------+--------+---------+-------------+---------+-------------------+-----------------+-----------------+------------+

That's it, the node is back but with no service interruption.

At this point we can decide whether to make this node a reader like the others, or to wait and plan a proper time of day to put it back as writer while, in the meantime, it takes a bit of load to warm its buffer pool.
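The weight trick used above can be reasoned about with a small Python sketch. It assumes, as an inference from the outputs in this article rather than from ProxySQL's code, that the writer is the healthy node with the highest weight and that ties go to the hostname that sorts last; `pick_writer` and `failback_on_rejoin` are hypothetical helpers.

```python
from typing import Dict


def pick_writer(weights: Dict[str, int]) -> str:
    """Assumed rule: highest weight wins; ties go to the hostname sorting last."""
    return max(weights, key=lambda host: (weights[host], host))


def failback_on_rejoin(survivors: Dict[str, int], rejoining: str, weight: int) -> bool:
    """True if the writer changes when `rejoining` comes back with `weight`."""
    writer_before = pick_writer(survivors)
    writer_after = pick_writer({**survivors, rejoining: weight})
    return writer_after != writer_before


# Nodes still in service after node 1 was moved to the OFFLINE hostgroup.
survivors = {"192.168.1.21": 100, "192.168.1.231": 100}

# Node 1 returns with its original weight: the writer moves back (failback).
print(failback_on_rejoin(survivors, "192.168.1.205", 1000))

# Node 1 returns after its weight was lowered to 10 in hostgroup 9101:
# the current writer keeps its role.
print(failback_on_rejoin(survivors, "192.168.1.205", 10))
```

The sketch only covers the decision itself; in the real setup the weight has to be lowered while the node is still in the OFFLINE hostgroup, as done above.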

The other point – and important information – is: what is ProxySQL currently checking on Galera? From reading the code, ProxySQL will trap the following:

- read_only

- wsrep_local_recv_queue

- wsrep_desync

- wsrep_reject_queries

- wsrep_sst_donor_rejects_queries

- primary_partition

Plus the standard sanity checks on the node. Finally to monitor the whole situation we can use this:

    mysql> SELECT * FROM mysql_server_galera_log ORDER BY time_start_us DESC LIMIT 10;
    +---------------+------+------------------+-----------------+-------------------+-----------+------------------------+-------------------+--------------+----------------------+---------------------------------+-------+
    | hostname      | port | time_start_us    | success_time_us | primary_partition | read_only | wsrep_local_recv_queue | wsrep_local_state | wsrep_desync | wsrep_reject_queries | wsrep_sst_donor_rejects_queries | error |
    +---------------+------+------------------+-----------------+-------------------+-----------+------------------------+-------------------+--------------+----------------------+---------------------------------+-------+
    | 192.168.1.231 | 3306 | 1549982591661779 | 2884            | YES               | NO        | 0                      | 4                 | NO           | NO                   | NO                              | NULL  |
    | 192.168.1.21  | 3306 | 1549982591659644 | 2778            | YES               | NO        | 0                      | 4                 | NO           | NO                   | NO                              | NULL  |
    | 192.168.1.205 | 3306 | 1549982591658728 | 2794            | YES               | NO        | 0                      | 4                 | NO           | YES                  | NO                              | NULL  |
    | 192.168.1.231 | 3306 | 1549982586669233 | 2827            | YES               | NO        | 0                      | 4                 | NO           | NO                   | NO                              | NULL  |
    | 192.168.1.21  | 3306 | 1549982586663458 | 5100            | YES               | NO        | 0                      | 4                 | NO           | NO                   | NO                              | NULL  |
    | 192.168.1.205 | 3306 | 1549982586658973 | 4132            | YES               | NO        | 0                      | 4                 | NO           | YES                  | NO                              | NULL  |
    | 192.168.1.231 | 3306 | 1549982581665317 | 3084            | YES               | NO        | 0                      | 4                 | NO           | NO                   | NO                              | NULL  |
    | 192.168.1.21  | 3306 | 1549982581661261 | 3129            | YES               | NO        | 0                      | 4                 | NO           | NO                   | NO                              | NULL  |
    | 192.168.1.205 | 3306 | 1549982581658242 | 2786            | YES               | NO        | 0                      | 4                 | NO           | NO                   | NO                              | NULL  |
    | 192.168.1.231 | 3306 | 1549982576661349 | 2982            | YES               | NO        | 0                      | 4                 | NO           | NO                   | NO                              | NULL  |
    +---------------+------+------------------+-----------------+-------------------+-----------+------------------------+-------------------+--------------+----------------------+---------------------------------+-------+

As you can see above, the log table keeps track of what has changed. In this case, it reports that node 1 has wsrep_reject_queries activated, and it will continue to log this until we set wsrep_reject_queries=none.
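As a rough illustration of how the log rows can be read, here is a Python sketch that flags which of the checks listed earlier a row fails and converts time_start_us to a readable timestamp. It is a simplification and not ProxySQL's decision logic: the `failed_checks` and `started_at` helpers are hypothetical, every flag is treated as disqualifying, and the threshold of 16 is the max_transactions_behind value configured above.

```python
from datetime import datetime, timezone
from typing import Dict, List


def failed_checks(row: Dict[str, str], max_transactions_behind: int = 16) -> List[str]:
    """List the monitored fields that would make this node unfit as a writer."""
    problems = []
    if row["primary_partition"] != "YES":
        problems.append("primary_partition")
    for flag in ("read_only", "wsrep_desync", "wsrep_reject_queries",
                 "wsrep_sst_donor_rejects_queries"):
        if row[flag] != "NO":
            problems.append(flag)
    if int(row["wsrep_local_recv_queue"]) > max_transactions_behind:
        problems.append("wsrep_local_recv_queue")
    return problems


def started_at(row: Dict[str, str]) -> datetime:
    """Convert time_start_us (microseconds since the epoch) to UTC."""
    seconds, micros = divmod(int(row["time_start_us"]), 1_000_000)
    return datetime.fromtimestamp(seconds, tz=timezone.utc).replace(microsecond=micros)


# Third row of the output above (node 1 with wsrep_reject_queries set).
node1 = {
    "hostname": "192.168.1.205", "time_start_us": "1549982591658728",
    "primary_partition": "YES", "read_only": "NO",
    "wsrep_local_recv_queue": "0", "wsrep_desync": "NO",
    "wsrep_reject_queries": "YES", "wsrep_sst_donor_rejects_queries": "NO",
}

print(node1["hostname"], started_at(node1).isoformat(), failed_checks(node1))
```

For this row the only failed check is wsrep_reject_queries, which is what moved node 1 to the OFFLINE hostgroup in the earlier test.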

Conclusions

ProxySQL Galera native integration is a useful feature to manage any Galera implementation, no matter whether it's Percona PXC, MariaDB Cluster or MySQL/Galera.

The generic approach is obviously a good thing; still, it may miss some specific extensions, like the one we have in PXC with the performance_schema pxc_cluster_view table.

I've already objected to the failover/failback behaviour, and I am here again to remind you: whenever you do a controlled failover, REMEMBER to change the weight to prevent an immediate failback.

This is obviously not possible in the case of a real failover and, for instance, a simple temporary eviction will cause two downtimes instead of only one.

Some environments are fine with that, others are not. Personally, I think there should be a FLAG in the configuration, so that we can decide whether failback should be executed or not.

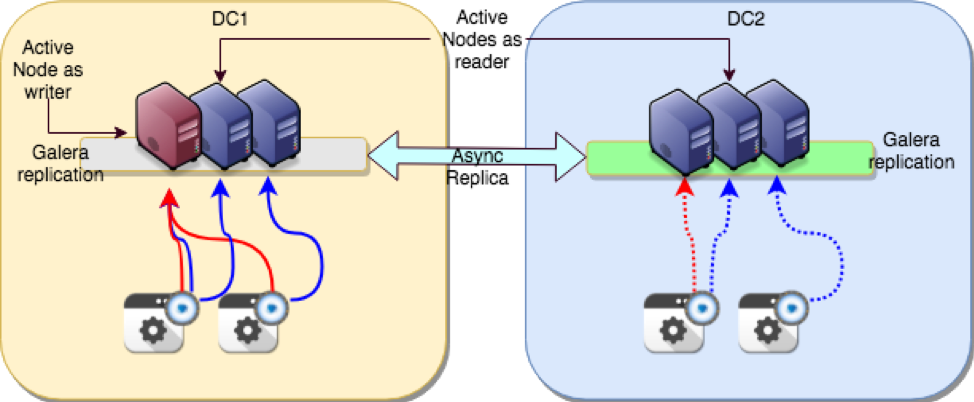
