Sharding with ProxySQL

Recently a colleague of mine ask me to provide a simple example on how ProxySQL can perform sharding.

Recently a colleague of mine ask me to provide a simple example on how ProxySQL can perform sharding.

ProxySQL is a very powerful platform that allow us to manipulate and manage our connections and query in a simple but effective way.

In this article I will show you how.

{autotoc enabled=yes}

Before starting is better to clarify some basic concepts.

ProxySQL organize its internal set of servers in Host Groups (HG), each HG can be associate to users and to Query Rules (QR).

Each QR can be final (apply = 1) or can let ProxySQL continue to parse other QR.

A QR can be a rewrite action, or can be a simple match, it can have a specific target HG, or be generic, finally QR are defined using regex.

You can see QR as a sequence of filters and transformation that you can arrange as you like.

These simple basic rules give us enormous flexibility, and allow us to create very simple actions, like a simple query re-write or very complex chains that could see dozens of QR concatenated.

Documentation can be found here

The information related to HG or QR is easily accessible using the the ProxySQL administrator interface, in the tables mysql_servers, mysql_query_rules and stats.stats_mysql_query_rules; the last one allow us to evaluate if and how the rule(s) is used.

About sharding, what ProxySQL can do for us to achieve what we need in a (relatively easy) way?

Some people/company include sharding logic in the application, and use multiple connection to reach the different targets, or have some logic to split the load across several schemas/tables.

ProxySQL allow us to simplify the way connectivity and query distribution is suppose to work reading data in the query or accepting HINTS.

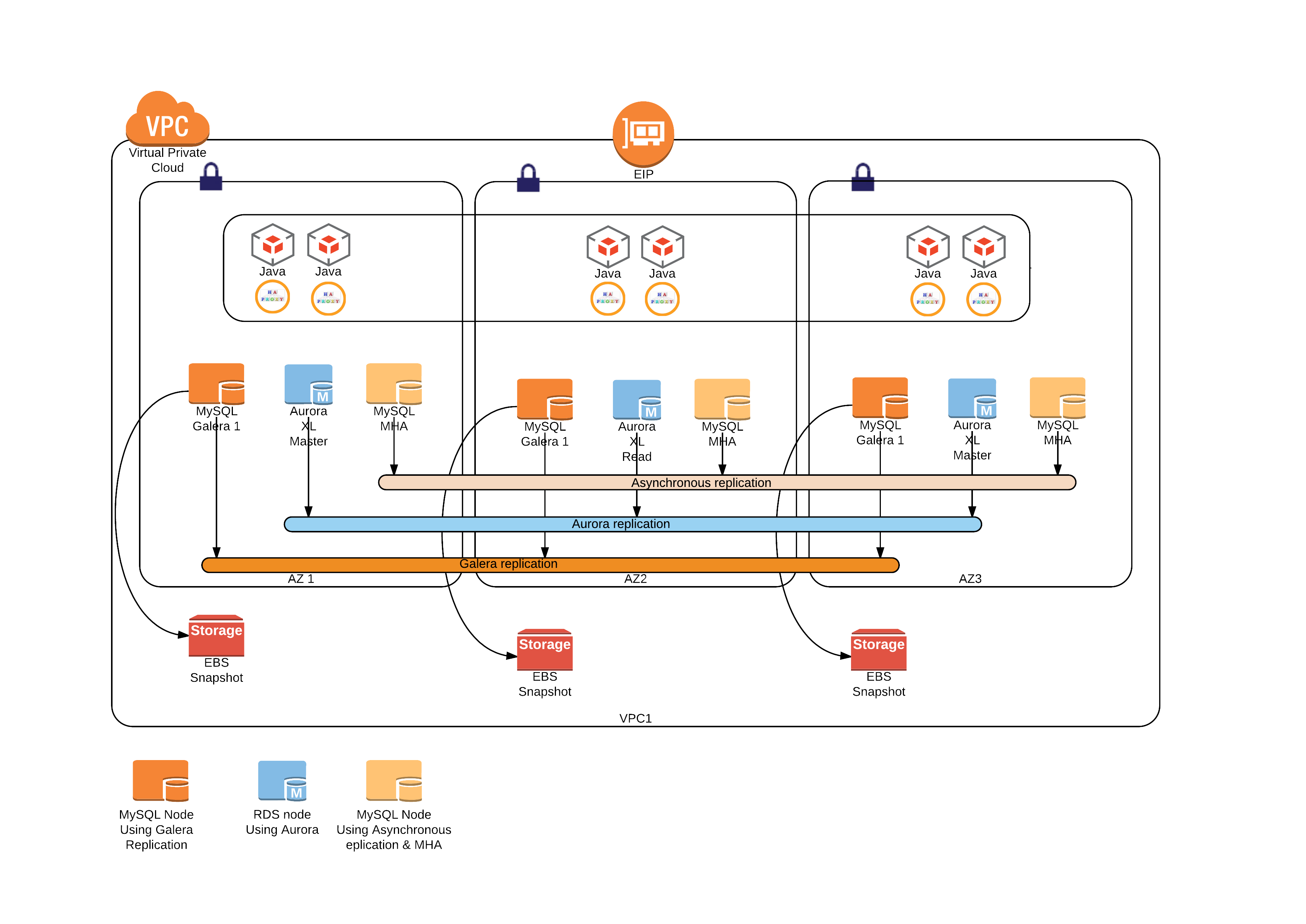

No matter which kind of requirements the sharding exercise can be summarize in few different categories.

- By split the data inside the same container (like having a shard by State where each State is a schema)

- By physical data location (this can have multiple mysql servers in the same room, as well as having them geographically distributed)

- Combination of the two, where I do split by state using a server dedicated and again split by schema/table by whatever (say by gender)

In the following examples I will show how to use ProxySQL to cover the three different scenario defined above and a bit more.

The example below will report text from the Admin ProxySQL interface and from MySQL console.I will mark each one as follow:

- Mc for MySQL console

- Pa for ProxySQL Admin

Please note that mysql console MUST use the -c flag to pass the comments in the query. This because the default behaviour, in mysql console, is to remove the comments.

I am going to illustrate procedures that you can replicate on your laptop.

This because I want you to test directly the ProxySQL functionalities.

For the example describe below I have used PrxySQL v1.2.2 that is going to become the master in few days.

You can download it from :

- git clone https://github.com/sysown/proxysql.git

- git checkout v1.2.2

- Then to compile :

- cd make

- make install

If you need full instructions on how to install and configure ProxySQL than read here and here

Finally you need to have the WORLD test db loaded, world test DB can be found here

First example/exercise is :

Shard inside the same MySQL Server using 3 different schemas split by continent.

Obviously you can have any number of shards and number of relative schemas.

What is relevant here is to demonstrate how traffic can be redirect to different targets (schemas) maintaining the same structure (tables).

This discriminating the target on the base of some relevant information in the Data or pass by the application.

Ok let us roll the ball.

Having :

[Mc] +---------------+-------------+ | Continent | count(Code) | +---------------+-------------+ | Asia | 51 | <-- | Europe | 46 | <-- | North America | 37 | | Africa | 58 | <-- | Oceania | 28 | | Antarctica | 5 | | South America | 14 | +---------------+-------------+

For this exercise you can use single host or multiple servers in replica.

Summarizing you will need:

- 3 hosts: 192.168.1.[5-6-7] (only one needed now but the others are for future use)

- 3 schemas: Continent X + world schema

- 1 user : user_shardRW

- 3 hostgroups: 10, 20, 30 (for future use)

We will create the following Schemas Asia, Africa, Europe first.

[Mc] CREATE schema [Asia|Europe|Africa]; CREATE TABLE Asia.City AS SELECT a.* FROM world.City a JOIN Country ON a.CountryCode = Country.code WHERE Continent='Asia' ; CREATE TABLE Europe.City AS SELECT a.* FROM world.City a JOIN Country ON a.CountryCode = Country.code WHERE Continent='Europe' ; CREATE TABLE Africa.City AS SELECT a.* FROM world.City a JOIN Country ON a.CountryCode = Country.code WHERE Continent='Africa' ; CREATE TABLE Asia.Country AS SELECT * FROM world.Country WHERE Continent='Asia' ; CREATE TABLE Europe.Country AS SELECT * FROM world.Country WHERE Continent='Europe' ; CREATE TABLE Africa.Country AS SELECT * FROM world.Country WHERE Continent='Africa' ;

Create the user:

[Mc] GRANT ALL ON *.* TO user_shardRW@'%' IDENTIFIED BY 'test';

Now let us start to configure the ProxySQL:

[Pa] INSERT INTO mysql_users (username,password,active,default_hostgroup,default_schema) VALUES ('user_shardRW','test',1,10,'test_shard1'); LOAD MYSQL USERS TO RUNTIME;SAVE MYSQL USERS TO DISK; INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.1.5',10,3306,100); INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.1.6',20,3306,100); INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.1.7',30,3306,100); LOAD MYSQL SERVERS TO RUNTIME; SAVE MYSQL SERVERS TO DISK;

With this we have defined the User, the servers and the Host groups.

Let us start to define the logic with the query rules:

[Pa]

DELETE FROM mysql_query_rules WHERE rule_id > 30; INSERT INTO mysql_query_rules (rule_id,active,username,match_pattern,replace_pattern,apply) VALUES (31,1,'user_shardRW',"^SELECT\s*(.*)\s*from\s*world.(\S*)\s(.*).*Continent='(\S*)'\s*(\s*.*)$","SELECT \1 from \4.\2 WHERE 1=1 \5",1); LOAD MYSQL QUERY RULES TO RUNTIME;SAVE MYSQL QUERY RULES TO DISK;

I am now going to query the master (or a single node) but I am expecting ProxySQL to redirect the query to the right shard catching the value of the Continent.

[Mc] SELECT name,population FROM world.City WHERE Continent='Europe' AND CountryCode='ITA' ORDER BY population DESC LIMIT 1; +------+------------+ | name | population | +------+------------+ | Roma | 2643581 | +------+------------+

Well you can say ... "hey you are querying the schema world, of course you get back the correct data".

But this is not what had really happened, ProxySQL did not query the schema world but the schema Europe.

Let see the details:

[Pa] SELECT * FROM stats_mysql_query_digest; Original :SELECT name,population FROM world.City WHERE Continent='Europe' AND CountryCode='ITA' ORDER BY population DESC LIMIT 1; Transformed :SELECT name,population FROM Europe.City WHERE ?=? AND CountryCode=? ORDER BY population DESC LIMIT ?

Let me explain what happened.

Rule 31 in ProxySQL will take all the FIELDS we will pass in the query, it will catch the CONTINENT in the where clause, it will take any condition after the WHERE and it will reorganize the query all using the RegEx.

Does this works for any table in the sharded schemas

Of course it does.

A query like:

SELECT name,population FROM world.Country WHERE Continent='Asia' ;

Will be transformed into:

SELECT name,population FROM Asia.Country WHERE ?=?

[Mc] +----------------------+------------+ | name | population | +----------------------+------------+ | Afghanistan | 22720000 | | United Arab Emirates | 2441000 | | Armenia | 3520000 | <snip ...> | Vietnam | 79832000 | | Yemen | 18112000 | +----------------------+------------+

Another possible a approach to instruct ProxySQL to shard is:

Pass a hint inside a comment.

Let see how.

First let me disable the rule I have just insert, this is not really needed but so you can see how :)

[Pa] mysql> UPDATE mysql_query_rules SET active=0 WHERE rule_id=31; Query OK, 1 row affected (0.00 sec) mysql> LOAD MYSQL QUERY RULES TO RUNTIME;SAVE MYSQL QUERY RULES TO DISK; Query OK, 0 rows affected (0.00 sec)

Done.

Now what I want to have is that *ANY* query that contains comment /* continent=X */ should go to the continent X schema, same server.

To do so, I will instruct ProxySQL to replace any reference to the world schema inside the the query I am going to submit.

[Pa] DELETE FROM mysql_query_rules WHERE rule_id IN (31,33,34,35,36); INSERT INTO mysql_query_rules (rule_id,active,username,match_pattern,replace_pattern,apply,FlagOUT,FlagIN) VALUES (31,1,'user_shardRW',"\S*\s*\/\*\s*continent=.*Asia\s*\*.*",NULL,0,23,0); INSERT INTO mysql_query_rules (rule_id,active,username,match_pattern,replace_pattern,apply,FlagIN,FlagOUT) VALUES (32,1,'user_shardRW','world.','Asia.',0,23,23); INSERT INTO mysql_query_rules (rule_id,active,username,match_pattern,replace_pattern,apply,FlagOUT,FlagIN) VALUES (33,1,'user_shardRW',"\S*\s*\/\*\s*continent=.*Europe\s*\*.*",NULL,0,25,0); INSERT INTO mysql_query_rules (rule_id,active,username,match_pattern,replace_pattern,apply,FlagIN,FlagOUT) VALUES (34,1,'user_shardRW','world.','Europe.',0,25,25); INSERT INTO mysql_query_rules (rule_id,active,username,match_pattern,replace_pattern,apply,FlagOUT,FlagIN) VALUES (35,1,'user_shardRW',"\S*\s*\/\*\s*continent=.*Africa\s*\*.*",NULL,0,24,0); INSERT INTO mysql_query_rules (rule_id,active,username,match_pattern,replace_pattern,apply,FlagIN,FlagOUT) VALUES (36,1,'user_shardRW','world.','Africa.',0,24,24); LOAD MYSQL QUERY RULES TO RUNTIME;SAVE MYSQL QUERY RULES TO DISK;

How this works?

I have defined mainly to concatenated rules.

The first capture the incoming query that contains the desired value (like continent = Asia).

If the match is there ProxySQL will exit that action, but while doing so it will read the Apply field and if Apply is 0 it will read the FlagOUT value. At this point it will go to the first rule (in sequence) that has the value of FlagIN equal to the FlagOUT.

The second rule will get the request and will replace the value of world with the one I have define.

In short it will replace whatever is in the match_pattern with the value that is in the replace_pattern.

Now what happens here is that ProxySQL implement the Re2 google library for RegEx.

Re2 is very fast but has some limitations, like it does NOT support (at the time of the writing) the flag option g.

In other words if I have a select with many tables and as such several "world." Re2 will replace ONLY the first instance.

As such a query like:

SELECT /* continent=Europe */ * FROM world.Country JOIN world.City ON world.City.CountryCode=world.Country.Code WHERE Country.code='ITA' ;

Will be transformed into :

SELECT /* continent=Europe */ * FROM Europe.Country JOIN world.City ON world.City.CountryCode=world.Country.Code WHERE Country.code='ITA' ;

And fail.

The other day with Rene' we were discussing how to solve this given the lack of implementation in Re2. Finally we had opted for recursive actions.

What this means?

It means that ProxySQL from v1.2.2 now has a new functionality that allow recursive calls to a Query Rule, the maximum number of iterations that ProxySQL can run, is managed by the option (global variable) mysql-query_processor_iterations.

Mysql-query_processor_iterations define how many operation, a query process can execute as whole (from start to end).

This new implementation allow us to reference a Query Rule to itself in order to be executed multiple times.

If you go back you will noticed that QR 34 has FlagIN and FlagOUT pointing to the same value of 25 and Apply =0.

This will bring ProxySQL to recursively call rule 34 until it change ALL the value of the word world.

The result is the following:

[Mc] SELECT /* continent=Europe */ Code, City.Name, City.population FROM world.Country JOIN world.City ON world.City.CountryCode=world.Country.Code WHERE City.population > 10000 GROUP BY Name ORDER BY City.Population DESC LIMIT 5; +------+---------------+------------+ | Code | Name | population | +------+---------------+------------+ | RUS | Moscow | 8389200 | | GBR | London | 7285000 | | RUS | St Petersburg | 4694000 | | DEU | Berlin | 3386667 | | ESP | Madrid | 2879052 | +------+---------------+------------+

You can see ProxySQL internal information using the following queries:

[Pa] SELECT active,hits, mysql_query_rules.rule_id, match_digest, match_pattern, replace_pattern, cache_ttl, apply,flagIn,flagOUT FROM mysql_query_rules NATURAL JOIN stats.stats_mysql_query_rules ORDER BY mysql_query_rules.rule_id; +--------+------+---------+---------------------+----------------------------------------+-----------------+-----------+-------+--------+---------+ | active | hits | rule_id | match_digest | match_pattern | replace_pattern | cache_ttl | apply | flagIN | flagOUT | +--------+------+---------+---------------------+----------------------------------------+-----------------+-----------+-------+--------+---------+ | 1 | 1 | 33 | NULL | \S*\s*\/\*\s*continent=.*Europe\s*\*.* | NULL | NULL | 0 | 0 | 25 | <-- | 1 | 4 | 34 | NULL | world. | Europe. | NULL | 0 | 25 | 25 | <-- | 1 | 0 | 35 | NULL | \S*\s*\/\*\s*continent=.*Africa\s*\*.* | NULL | NULL | 0 | 0 | 24 | | 1 | 0 | 36 | NULL | world. | Africa. | NULL | 0 | 24 | 24 | +--------+------+---------+---------------------+----------------------------------------+-----------------+-----------+-------+--------+---------+

And

[Pa] SELECT * FROM stats_mysql_query_digest; <snip AND taking only digest_text> SELECT Code, City.Name, City.population FROM Europe.Country JOIN Europe.City ON Europe.City.CountryCode=Europe.Country.Code WHERE City.population > ? GROUP BY Name ORDER BY City.Population DESC LIMIT ?

As you can see ProxySQL has nicely replace the word world.

And executed only on the desired schema.

How I can shard redirecting the queries to an Host?

(Instead of a schema)This is even easier :)

The main point is that whatever match the rule, should go to a defined HG.No rewrite imply which means less work.

So how this is done?As said before I have 3 NODES 192.168.1.[5-6-7]For this example I will use world db (no continent schema), distributed in each node, and I wil retrieve the node bind IP to be sure I am going on the right place.

What I will do is to instruct ProxySQL to send my query by using a HINT to a specific host.

I choose the hint "shard_host_HG" and I am going to inject it in the query as comment.

As such the Query Rules will be:

[Pa] DELETE FROM mysql_query_rules WHERE rule_id IN (40,41,42, 10,11,12); INSERT INTO mysql_query_rules (rule_id,active,username,match_pattern,destination_hostgroup,apply) VALUES (10,1,'user_shardRW',"\/\*\s*shard_host_HG=.*Europe\s*\*.",10,0); INSERT INTO mysql_query_rules (rule_id,active,username,match_pattern,destination_hostgroup,apply) VALUES (11,1,'user_shardRW',"\/\*\s*shard_host_HG=.*Asia\s*\*.",20,0); INSERT INTO mysql_query_rules (rule_id,active,username,match_pattern,destination_hostgroup,apply) VALUES (12,1,'user_shardRW',"\/\*\s*shard_host_HG=.*Africa\s*\*.",30,0); LOAD MYSQL QUERY RULES TO RUNTIME;SAVE MYSQL QUERY RULES TO DISK;

While the queries I am going to test are:

[Mc] SELECT /* shard_host_HG=Europe */ City.Name, City.Population FROM world.Country JOIN world.City ON world.City.CountryCode=world.Country.Code WHERE Country.code='ITA' LIMIT 5; SELECT * /* shard_host_HG=Europe */ FROM information_schema.GLOBAL_VARIABLES WHERE variable_name LIKE 'bind%'; SELECT /* shard_host_HG=Asia */ City.Name, City.Population FROM world.Country JOIN world.City ON world.City.CountryCode=world.Country.Code WHERE Country.code='IND' LIMIT 5; SELECT * /* shard_host_HG=Asia */ FROM information_schema.GLOBAL_VARIABLES WHERE variable_name LIKE 'bind%'; SELECT /* shard_host_HG=Africa */ City.Name, City.Population FROM world.Country JOIN world.City ON world.City.CountryCode=world.Country.Code WHERE Country.code='ETH' LIMIT 5; SELECT * /* shard_host_HG=Africa */ FROM information_schema.GLOBAL_VARIABLES WHERE variable_name LIKE 'bind%';

Running the query for Africa, I will get:

[Mc] SELECT /* shard_host_HG=Africa */ City.Name, City.Population FROM world.Country JOIN world.City ON world.City.CountryCode=world.Country.Code WHERE Country.code='ETH' LIMIT 5; SELECT * /* shard_host_HG=Africa */ FROM information_schema.GLOBAL_VARIABLES WHERE variable_name LIKE 'bind%'; +-------------+------------+ | Name | Population | +-------------+------------+ | Addis Abeba | 2495000 | | Dire Dawa | 164851 | | Nazret | 127842 | | Gonder | 112249 | | Dese | 97314 | +-------------+------------+ +---------------+----------------+ | VARIABLE_NAME | VARIABLE_VALUE | +---------------+----------------+ | BIND_ADDRESS | 192.168.1.7 | +---------------+----------------+

That will give me :

[Pa] SELECT active,hits, mysql_query_rules.rule_id, match_digest, match_pattern, replace_pattern, cache_ttl, apply,flagIn,flagOUT FROM mysql_query_rules NATURAL JOIN stats.stats_mysql_query_rules ORDER BY mysql_query_rules.rule_id; +--------+------+---------+---------------------+----------------------------------------+-----------------+-----------+-------+--------+---------+ | active | hits | rule_id | match_digest | match_pattern | replace_pattern | cache_ttl | apply | flagIN | flagOUT | +--------+------+---------+---------------------+----------------------------------------+-----------------+-----------+-------+--------+---------+ | 1 | 0 | 40 | NULL | \/\*\s*shard_host_HG=.*Europe\s*\*. | NULL | NULL | 0 | 0 | 0 | | 1 | 0 | 41 | NULL | \/\*\s*shard_host_HG=.*Asia\s*\*. | NULL | NULL | 0 | 0 | 0 | | 1 | 2 | 42 | NULL | \/\*\s*shard_host_HG=.*Africa\s*\*. | NULL | NULL | 0 | 0 | 0 | <-- Note the HITS (2 as the run queries) +--------+------+---------+---------------------+----------------------------------------+-----------------+-----------+-------+--------+---------+

In this example we have NO replace_pattern this is only a matching and redirecting Rule, where the destination HG is defined in the value of destination_hostgroup attribute while inserting.

In the case for Africa is HG 30.

The server in HG 30 is:

[Pa] SELECT hostgroup_id,hostname,port,STATUS FROM mysql_servers ; +--------------+-------------+------+--------+ | hostgroup_id | hostname | port | STATUS | +--------------+-------------+------+--------+ | 10 | 192.168.1.5 | 3306 | ONLINE | | 20 | 192.168.1.6 | 3306 | ONLINE | | 30 | 192.168.1.7 | 3306 | ONLINE | <--- +--------------+-------------+------+--------+

Which match perfectly with our returned value.

You can try by your own the other two continents.

Using destination_hostgroup

Another way to assign to which final host a query should go is to use the the destination_hostgroup, set the Schema_name attribute and use the use schema syntax in the query.

like:

[Pa] INSERT INTO mysql_query_rules (active,schemaname,destination_hostgroup,apply) VALUES (1, 'shard00', 1, 1), (1, 'shard01', 1, 1), (1, 'shard03', 1, 1), (1, 'shard04', 2, 1), (1, 'shard06', 2, 1), (1, 'shard06', 2, 1), (1, 'shard07', 3, 1), (1, 'shard08', 3, 1), (1, 'shard09', 3, 1);

And then in the query do something like :

USE shard02; SELECT * FROM tablex;

I mention this method because is one of the most common at the moment in large companies using SHARDING.

But it is not safe, because it relays on the fact the query will be execute in the desired HG.

While the risk of error is high.

Just think if a query doing join against a specified SHARD:

USE shard01; SELECT * FROM tablex JOIN shard03 ON tablex.id = shard03.tabley.id;

This will probably generate an error because shard03 is probably NOT present on the host containing shar01.

As such this approach can be used ONLY when you are 100% sure about what you are doing and when you are sure NO query will have explicit schema declaration.

Shard By Host and by Schema

Finally is obviously possible to combine the two approaches sharding by host and have only a subset of schemas

To do so let us use all the 3 nodes and have the schema distribute as follow:

- Europe on Server 192.168.1.5 -> HG 10

- Asia on Server 192.168.1.6 -> HG 20

- Africa on Server 192.168.1.7 -> HG 30

I have already set the query rules both using HINT so what I have to do is to use them BOTH to combine the operations:

[Mc] SELECT /* shard_host_HG=Asia */ /* continent=Asia */ City.Name, City.Population FROM world.Country JOIN world.City ON world.City.CountryCode=world.Country.Code WHERE Country.code='IND' LIMIT 5; SELECT * /* shard_host_HG=Asia */ FROM information_schema.GLOBAL_VARIABLES WHERE variable_name LIKE 'bind%'; +--------------------+------------+ | Name | Population | +--------------------+------------+ | Mumbai (Bombay) | 10500000 | | Delhi | 7206704 | | Calcutta [Kolkata] | 4399819 | | Chennai (Madras) | 3841396 | | Hyderabad | 2964638 | +--------------------+------------+ 5 rows IN SET (0.00 sec) +---------------+----------------+ | VARIABLE_NAME | VARIABLE_VALUE | +---------------+----------------+ | BIND_ADDRESS | 192.168.1.6 | +---------------+----------------+ 1 row IN SET (0.01 sec)

[Pa] mysql> SELECT digest_text FROM stats_mysql_query_digest; +--------------------------------------------------------------------------------------------------------------------------------------------+ | digest_text | +--------------------------------------------------------------------------------------------------------------------------------------------+ | SELECT * FROM information_schema.GLOBAL_VARIABLES WHERE variable_name LIKE ? | | SELECT City.Name, City.Population FROM Asia.Country JOIN Asia.City ON Asia.City.CountryCode=Asia.Country.Code WHERE Country.code=? LIMIT ? | +--------------------------------------------------------------------------------------------------------------------------------------------+ 2 rows IN SET (0.00 sec) mysql> SELECT active,hits, mysql_query_rules.rule_id, match_digest, match_pattern, replace_pattern, cache_ttl, apply,flagIn,flagOUT FROM mysql_query_rules NATURAL JOIN stats.stats_mysql_query_rules ORDER BY mysql_query_rules.rule_id; +--------+------+---------+---------------------+----------------------------------------+-----------------+-----------+-------+--------+---------+ | active | hits | rule_id | match_digest | match_pattern | replace_pattern | cache_ttl | apply | flagIN | flagOUT | +--------+------+---------+---------------------+----------------------------------------+-----------------+-----------+-------+--------+---------+ | 1 | 0 | 10 | NULL | \/\*\s*shard_host_HG=.*Europe\s*\*. | NULL | NULL | 0 | 0 | NULL | | 1 | 2 | 11 | NULL | \/\*\s*shard_host_HG=.*Asia\s*\*. | NULL | NULL | 0 | 0 | NULL | | 1 | 0 | 12 | NULL | \/\*\s*shard_host_HG=.*Africa\s*\*. | NULL | NULL | 0 | 0 | NULL | | 1 | 0 | 13 | NULL | NULL | NULL | NULL | 0 | 0 | 0 | | 1 | 1 | 31 | NULL | \S*\s*\/\*\s*continent=.*Asia\s*\*.* | NULL | NULL | 0 | 0 | 23 | | 1 | 4 | 32 | NULL | world. | Asia. | NULL | 0 | 23 | 23 | | 1 | 0 | 33 | NULL | \S*\s*\/\*\s*continent=.*Europe\s*\*.* | NULL | NULL | 0 | 0 | 25 | | 1 | 0 | 34 | NULL | world. | Europe. | NULL | 0 | 25 | 25 | | 1 | 0 | 35 | NULL | \S*\s*\/\*\s*continent=.*Africa\s*\*.* | NULL | NULL | 0 | 0 | 24 | | 1 | 0 | 36 | NULL | world. | Africa. | NULL | 0 | 24 | 24 | +--------+------+---------+---------------------+----------------------------------------+-----------------+-----------+-------+--------+---------+

As you can see rule 11 has two HITS, which means my queries will go to the associated HG.

But given Apply for rule 11 is =0, ProxySQL will first continue to process the QueryRules.

As such it will also transform the queries as for rules 31 and 32, each one having the expected number of hits (1 the first and the 4 because the loop, the second).

How to shard base on a MOD

to shard base on a MOD value Like handle table sharding based on the mod of the user id?

such that `insert into 'foo' ('user_id', 'name') values (180, 'bar')` becomes `insert into 'foo_X ('user_id', 'name') values (180, 'bar')` based on 180 % 25, where 25 is the number of sharded tables.

Based on 180 % 25, where 25 is the number of sharded tables.

Solution is to create X number of query rules to manage the MOD value:

INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(00|25|50|75),","INSERT INTO foo_00 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(01|26|51|76),","INSERT INTO foo_01 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(02|27|52|77),","INSERT INTO foo_02 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(03|28|53|78),","INSERT INTO foo_03 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(04|29|54|79),","INSERT INTO foo_04 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(05|30|55|80),","INSERT INTO foo_05 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(06|31|56|81),","INSERT INTO foo_06 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(07|32|57|82),","INSERT INTO foo_07 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(08|33|58|83),","INSERT INTO foo_08 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(09|34|59|84),","INSERT INTO foo_09 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(10|35|60|85),","INSERT INTO foo_10 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(11|36|61|86),","INSERT INTO foo_11 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(12|37|62|87),","INSERT INTO foo_12 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(13|38|63|88),","INSERT INTO foo_13 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(14|39|64|89),","INSERT INTO foo_14 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(15|40|65|90),","INSERT INTO foo_15 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(16|41|66|91),","INSERT INTO foo_16 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(17|42|67|92),","INSERT INTO foo_17 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(18|43|68|93),","INSERT INTO foo_18 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(19|44|69|94),","INSERT INTO foo_19 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(20|45|70|95),","INSERT INTO foo_20 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(21|46|71|96),","INSERT INTO foo_21 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(22|47|72|97),","INSERT INTO foo_22 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(23|48|73|98),","INSERT INTO foo_23 (user_id,name) VALUES(\1\2,",1); INSERT INTO mysql_query_rules (active, match_pattern, replace_pattern, apply) VALUES (1, "INSERT INTO foo \(user_id,name\) VALUES \((\d+)(24|49|74|99),","INSERT INTO foo_24 (user_id,name) VALUES(\1\2,",1); LOAD MYSQL QUERY RULES TO RUNTIME;

As you can see below this solution allow the transparent assignment using MOD 25

1 2 3 4 5 6 7 |

mysql> CREATE TABLE foo_00 (user_id INT, name VARCHAR(30)); mysql> CREATE TABLE foo_01 (user_id INT, name VARCHAR(30)); mysql> CREATE TABLE foo_02 (user_id INT, name VARCHAR(30)); mysql> CREATE TABLE foo_03 (user_id INT, name VARCHAR(30)); mysql> CREATE TABLE foo_04 (user_id INT, name VARCHAR(30)); mysql> INSERT INTO foo (user_id,name) VALUES (1000,"nameA"); mysql> INSERT INTO foo (user_id,name) VALUES (7456076,"nameB"); |

SELECT * FROM foo_00; +---------+-------+ | user_id | name | +---------+-------+ | 1000 | nameA | +---------+-------+ mysql> SELECT * FROM foo_01; +---------+-------+ | user_id | name | +---------+-------+ | 7456076 | nameB | +---------+-------+

Credits

It is obvious that I need to acknowledge and kudo the work Rene' Cannao is doing to make ProxySQL a solid, fast and flexible product.

I have also to mention that I was and am working with him very often, more often than he likes, asking him fix and discussing with him optimization.

Requests that he try to satisfied with surprising speed and efficiency.

Reference

https://github.com/sysown/proxysql/tree/v1.2.2/doc

https://github.com/google/re2/wiki/Syntax

http://www.proxysql.com/2015/09/proxysql-tutorial-setup-in-mysql.html

https://github.com/sysown/proxysql/blob/v1.2.2/doc/configuration_howto.md

https://github.com/sysown/proxysql/blob/v1.2.2/INSTALL.md

https://dev.mysql.com/doc/index-other.html